Optimized for Outrage

How Social Media Algorithms Are Rewiring Democracy

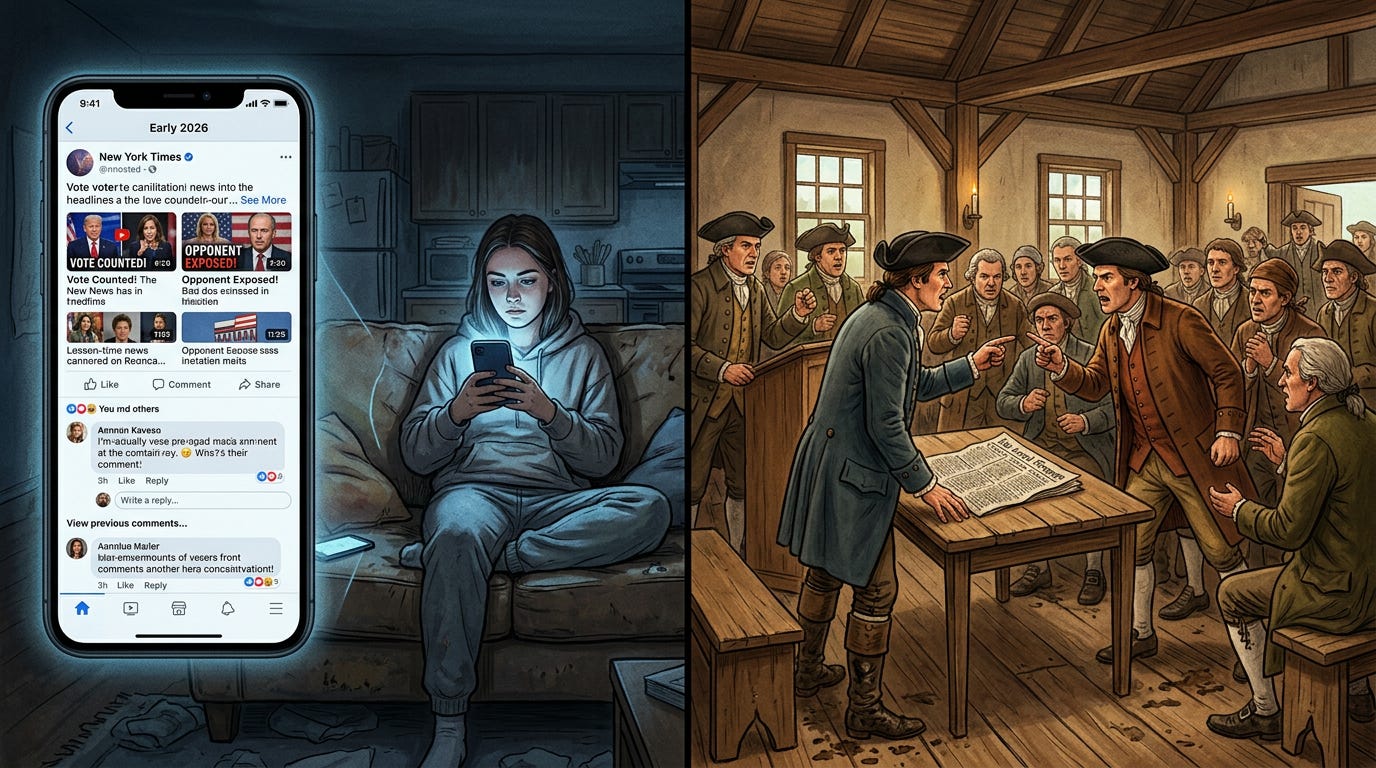

Picture a voter in early 2026 who has never once logged into a news site, never subscribed to a newspaper, and never deliberately chosen a political information source. And yet, she is extraordinarily well-informed — or at least feels that way. Her phone tells her what matters. It surfaces the stories that confirm her existing frustrations, amplifies the politicians who speak her language, and quietly buries the ones who don’t. She didn’t ask for any of this. She just kept scrolling.

Now contrast that with a different scene: a New England town meeting in the 1790s, muddy boots on a wooden floor, everyone in the same room arguing about the same set of facts, all of them printed in the same imperfect, occasionally sensationalist local newspaper. Nobody mistook that for utopia. There was plenty of tribalism, personal animosity, and misinformation even then. But everyone at least had to breathe the same air. They had to look across the room at the people they disagreed with.

The algorithm has eliminated that room.

This is not a story about social media being ‘bad.’ It’s a story about a specific and measurable design choice — optimizing digital information systems for engagement rather than civic function — and the ways that choice is quietly dismantling the basic infrastructure that representative democracy depends on. The town square still exists. It’s just been renovated by engineers whose only performance metric is how long you stay inside it. As Tristan Harris, the former Google design ethicist, put it at a Harvard Law School panel: ‘The algorithm has primacy over media, over each of us, and it controls what we do.’

Social media algorithms, engineered to maximize engagement and advertising revenue, systematically corrode the three structural pillars of representative democracy: a shared civic epistemology, genuine deliberation, and meaningful accountability between citizens and their representatives.

The Machine Behind the Feed

Most people imagine they choose what they see online. They don’t. Not really. The content that surfaces in your feed is selected, ranked, and sequenced by an algorithm whose primary job is to predict what will hold your attention longest. That’s the product. Not news. Not information. Not truth. Attention.

And attention, it turns out, is most reliably captured by a fairly narrow range of emotional stimuli: outrage, fear, tribal solidarity, and the pleasure of watching someone you dislike get embarrassed. A 2024 study published through the Knight First Amendment Institute at Columbia documented what many researchers had long suspected — that platforms consistently amplify divisive content not because their engineers are ideologically motivated, but because divisive content simply performs better on engagement metrics. A 2025 follow-up in PNAS Nexus confirmed the pattern: the content most likely to be amplified is the content most likely to trigger strong affective responses, particularly negative ones.

This is the business model. Platforms generate revenue through advertising. Advertising rates are tied to time-on-platform. Time-on-platform is maximized by emotional engagement. Emotional engagement is most reliably delivered through conflict. Therefore, conflict is what the algorithm serves, at scale, billions of times per day.
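To make that chain concrete, here is a minimal sketch of an engagement-first ranker. Everything in it is an assumption for illustration: the `Post` fields, the two weights, and the scoring function are hypothetical and are not taken from any platform's actual system. It only shows how a feed sorted purely by predicted engagement will push provocative items above informative ones whenever provocation is the stronger predictor of attention.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    title: str
    outrage: float      # 0..1, hypothetical score for how provocative the post is
    informative: float  # 0..1, hypothetical score for civic or informational value


# Assumed weights: illustrative only, chosen so that provocation
# predicts attention more strongly than informational value does.
OUTRAGE_WEIGHT = 0.8
INFORMATIVE_WEIGHT = 0.2


def predicted_engagement(post: Post) -> float:
    """Toy stand-in for a model that predicts how long a post holds attention."""
    return OUTRAGE_WEIGHT * post.outrage + INFORMATIVE_WEIGHT * post.informative


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order the feed by predicted engagement, highest first."""
    return sorted(posts, key=predicted_engagement, reverse=True)


posts = [
    Post("Budget tradeoffs explained", outrage=0.1, informative=0.9),
    Post("You won't believe what they said about you", outrage=0.9, informative=0.1),
    Post("Council meeting recap", outrage=0.2, informative=0.6),
]

feed = rank_feed(posts)
for position, post in enumerate(feed, start=1):
    print(position, post.title, round(predicted_engagement(post), 2))
```

Nothing in the ranker mentions politics or ideology. The provocative post rises to the top and the budget explainer sinks to the bottom simply because the objective rewards attention and nothing else.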

“Nobody sat in a conference room and decided to destabilize democracy. The damage is a byproduct of an incentive structure that is working exactly as designed — just for shareholders, not for citizens.”

We’ve Seen This Movie Before

It would be a mistake to pretend that democracy’s information ecosystem was ever pristine. The history of mass media is basically a history of periodic panics about whatever new technology was warping public opinion that decade.

In the 1890s, it was yellow journalism. William Randolph Hearst and Joseph Pulitzer ran newspapers that looked, in the idiom of the time, a lot like today’s most viral content — lurid, emotionally manipulative, designed to provoke rather than inform. Historians still debate how much Hearst’s coverage actually pushed the United States toward the Spanish-American War, but the pattern is familiar: sensationalism sold papers, and selling papers required sensationalism.

By the 1990s, the engagement algorithm was called talk radio. Rush Limbaugh and his imitators had figured out, purely through trial and error, that outrage kept listeners tuned in longer than measured analysis. The format rewarded the most provocative framing of every issue and helped drive a significant realignment in American political culture over roughly fifteen years.

Cable news, with its 24-hour cycle, made a parallel discovery: conflict between two screaming pundits was cheaper to produce and more engaging than actual investigative reporting. The incentives were financial, not ideological. The effects were political.

There’s a legitimate argument that we will adapt to social media algorithms too. Society has always adapted — imperfectly and slowly — each time a new medium disrupted the information order. But here’s where the historical parallel starts to break down. Yellow journalism, talk radio, and cable news were all broadcast media. They sent the same signal to millions of people simultaneously. Algorithmic social media is different in kind, not just degree. It is a personalized broadcast — a different channel for every single viewer, customized in real time to their specific psychology. The implications of that distinction for democratic governance are profound, and we are only beginning to understand them.

Pillar One: The Destruction of Shared Reality

Representative democracy has always rested on a set of assumptions about what citizens share. Not agreement — democracy doesn’t require agreement. It requires a shared arena in which disagreement can occur. You need to be arguing about the same facts, the same events, the same candidates, the same problems, even if you reach different conclusions. That shared epistemic commons, however imperfect, is what makes it possible for a democratic decision to mean anything.

Research on filter bubbles has been contentious — and fairness requires acknowledging that. A comprehensive 2022 literature review by the Reuters Institute at Oxford found that the evidence for algorithmically driven echo chambers is real but uneven, with strong effects for politically engaged users and weaker effects for casual ones. Some experimental studies have found that exposure to cross-cutting content doesn’t necessarily reduce polarization and can sometimes increase it. The picture is genuinely complicated.

But ‘complicated’ is different from ‘benign.’ A 2018 agent-based modeling study on filter bubble emergence found that even modest personalization effects compound over time to produce dramatically fragmented information environments, particularly for users who are already somewhat partisan. A field experiment published in Information, Communication and Society found that filter bubble effects on Twitter create measurable distortions in political perception, with users significantly overestimating the prevalence and extremism of opposing viewpoints.
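The compounding logic can be illustrated with a toy feedback loop. This is not the model from the 2018 study. It is a deliberately simplified sketch under stated assumptions: a single hypothetical user clicks like-minded items slightly more often than other items (the `preference` parameter), and the next round's feed simply mirrors that click mix.

```python
def simulate_feed_share(
    rounds: int, preference: float, start_share: float = 0.5
) -> list[float]:
    """Track the share of like-minded content in one user's feed over time."""
    share = start_share
    history = [share]
    for _ in range(rounds):
        # The user clicks like-minded items slightly more often than others.
        liked_clicks = share * (1.0 + preference)
        other_clicks = 1.0 - share
        # Assumption: the next feed simply mirrors the user's click mix.
        share = liked_clicks / (liked_clicks + other_clicks)
        history.append(share)
    return history


neutral = simulate_feed_share(rounds=50, preference=0.0)
modest = simulate_feed_share(rounds=50, preference=0.10)

print(f"no preference, after 50 rounds:  {neutral[-1]:.2f}")
print(f"10% preference, after 10 rounds: {modest[10]:.2f}")
print(f"10% preference, after 50 rounds: {modest[-1]:.2f}")
```

With no preference at all, the feed stays balanced. With a click preference of only 10 percent, the like-minded share climbs to roughly 72 percent after 10 rounds and about 99 percent after 50, because each round's small tilt becomes the starting point for the next. Real platforms are far messier than this, but the sketch shows why a modest effect per step is not the same as a modest effect over time.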

The practical consequence is a citizenry in which two people living on the same street may be operating from information environments as different as two people living in different countries. They’re not just reaching different conclusions from the same facts. They’re not encountering the same facts at all. This isn’t primarily a problem of misinformation, though misinformation makes it worse. It’s a structural problem in how information is distributed, and that structure is the algorithm.

Pillar Two: Deliberation Replaced by Performance

Democratic theory, from Madison to Habermas, has always rested on something more than just voting. The assumption is that political positions are tested and refined through public deliberation — reasoned argument, exposure to opposing views, and the friction of having to defend your conclusions to someone who disagrees with them. The vote is the outcome of the conversation, not a substitute for it.

Algorithms are systematically replacing that conversation with something else. When platforms rank content by engagement, they structurally reward what performs well under engagement metrics. Nuanced, carefully qualified arguments don’t go viral. Incendiary takes do. A politician who says ‘this is a complex tradeoff with legitimate competing interests on both sides’ will be algorithmically outcompeted by one who says ‘they want to destroy everything you love.’ The second statement drives shares. The first one doesn’t.

A 2025 study in Frontiers in Political Science analyzed Twitter’s algorithmic communities in the lead-up to the 2020 elections and documented a striking finding: within algorithmically sorted communities, political discourse had largely shifted from deliberation to acclamation. Users weren’t engaging with opposing arguments. They were applauding confirmations of beliefs they already held, with the algorithm efficiently delivering the next confirmation before the echo faded.

The microtargeting dimension makes this worse. Political campaigns learned early that the most cost-effective digital advertising strategy wasn’t persuading undecided voters — it was activating the most emotionally reactive members of the existing base. Cambridge Analytica made this famous and scandalous, but the underlying strategy was neither novel nor illegal. It was just a logical application of what the platform’s own business model incentivized. Target the outraged. Feed the outrage. Repeat.

“What you get, over time, is a political culture optimized for activation rather than persuasion. That’s not the same as democracy.”

Pillar Three: The Accountability Gap

The third structural damage runs downstream from the first two. Representative democracy depends on an accountability loop: citizens assess what their representatives do, form informed opinions about it, and use electoral mechanisms to reward or punish accordingly. Break the information environment badly enough, and that loop stops functioning.

A February 2026 study published in Nature analyzed the political effects of X’s (formerly Twitter’s) feed algorithm across a large sample of users and found that algorithmic ranking significantly altered political attitudes and voting-related behavior, with effects concentrated among highly partisan users most exposed to algorithmically amplified content. An earlier study from George Washington University found that social media feed algorithms measurably shift voter attitudes and behavior during election campaigns, with users who consume primarily algorithmically curated content showing greater partisan affective polarization — meaning they increasingly dislike the opposing party more than they like their own.

The same incentives shape how representatives themselves behave. Elected officials are not immune to algorithmic incentives. They live on these platforms. They watch which posts go viral and which disappear. They learn, quickly and viscerally, that substantive policy content performs poorly while provocative cultural commentary performs extremely well. The rational response, for a politician focused on visibility and fundraising, is to behave more like a content creator and less like a legislator. The result is what you might call the ‘attention primary’: a competition for algorithmic visibility that runs continuously between elections and rewards the most extreme, performative behavior.

A 2022 longitudinal study of the Australian Twittersphere found that echo chamber dynamics had intensified over the study period, with partisan sorting becoming more pronounced and cross-cutting conversations becoming rarer. The trend suggests these effects aren’t stabilizing — they’re deepening as platforms become more sophisticated at predicting and delivering what users will engage with. The downstream consequences are visible in the U.S. Congress: bipartisan legislative accomplishment has become the exception rather than the norm, even on issues where polling suggests broad public agreement.

Why the Fixes Keep Failing

There has been no shortage of proposed solutions. Section 230 reform has been debated for years without resolution. The European Union’s Digital Services Act became fully applicable in February 2024 and imposed some transparency and audit requirements on large platforms, but its enforcement mechanisms remain contested and its scope is limited by jurisdictional boundaries. Meta, X, TikTok, and YouTube have all announced periodic algorithmic adjustments in response to public pressure, usually without independent verification or meaningful accountability.

The Brookings Institution has documented the core problem: platforms have no structural financial incentive to reduce engagement-driven polarization because polarization drives engagement. Voluntary reform, in this environment, is limited by competitive dynamics. If one platform reduces outrage amplification and loses user time to a competitor that doesn’t, it loses advertising revenue. The market mechanism, left to itself, selects for the most engagement-maximizing behavior, which is not the same as the most democratically beneficial behavior.

There is genuine promise in some of the research. A 2025 Stanford study found that giving users meaningful control over their own feed ranking (allowing them to reduce partisan content, for example) measurably lowered affective polarization without reducing user satisfaction. A field experiment published in Science found that simply reranking feeds to reduce content expressing partisan animosity produced measurable reductions in polarization among exposed users. The technical capacity to build less polarizing platforms appears to exist. What’s missing is the business rationale to deploy it.

This is where a combination of regulatory standards and market accountability matters. Platforms can implement algorithmic adjustments voluntarily while complying with transparency and audit frameworks that allow independent researchers and regulators to verify the effects. The EU’s DSA framework, despite its limitations, points in this direction. The question is whether democratic institutions can establish enforceable standards faster than the damage compounds.

The Memory Problem We Keep Ignoring

Much of the public debate about social media and democracy is implicitly shaped by a nostalgic memory of a pre-algorithmic political culture that was fairer, more deliberative, and more functional than the one we have now. That memory is selective. American democracy has always struggled with tribalism, misinformation, and the manipulation of public opinion by media organizations with financial incentives to sensationalize. The yellow press, the smoke-filled room, the McCarthy hearings, the Willie Horton ad — none of these required an algorithm.

The danger in nostalgia is that it points us toward the wrong solutions. If the problem were simply that social media is ‘too powerful,’ the answer would be to make it less powerful — regulate it, break it up, tax it, ignore it. But the problem is more specific than that. It’s that a particular design choice, made by profit-maximizing companies, has structurally compromised the information conditions that democratic governance requires. That’s an operational problem with operational solutions, not a yearning-for-the-good-old-days problem.

The genuinely difficult question is whether democratic institutions can evolve fast enough to impose those operational constraints on an economic model that profits from their absence. History offers modest grounds for optimism. Regulatory frameworks for broadcast media did eventually emerge, imperfect as they were. Financial regulation adapted to novel instruments after enough damage accumulated. The arc is long and the adjustment is usually painful and partial.

But the speed differential matters. Algorithms operate at machine speed. Democratic accountability operates at human speed. Every month of delay is another month of polarization compounding, deliberation eroding, and the accountability loop degrading. We are not running out of ideas for how to address this. We may, however, be running out of time in which those ideas remain useful.

The town square hasn’t disappeared. It’s been A/B tested, monetized, and optimized. And the version of it that generates the most revenue turns out to be a very poor venue for self-governance.

Sources & References

Peer-Reviewed Studies

Bail, C. A., et al. (2018). Exposure to opposing views on social media can increase political polarization. PNAS.

Cinelli, M., et al. (2021). The echo chamber effect on social media. PNAS.

Guess, A., et al. (2023). Resharing, engagement, and the amplification of divisive content on social media. Knight First Amendment Institute at Columbia University.

Huszar, F., et al. (2022). Algorithmic amplification of politics on Twitter. PNAS.

Moller, J., et al. (2025). Reranking partisan animosity in algorithmic social media feeds alters affective polarization. Science, Nov 2025.

Nyhan, B., et al. (2025). Social media research tool lowers the political temperature. Stanford Internet Observatory / Nature, Nov 2025.

Pansanella, V., et al. (2025). From deliberation to acclamation: how did Twitter’s algorithms foster political polarization before the 2020 elections. Frontiers in Political Science, Jan 2025.

Ribeiro, M. H., et al. (2025). Engagement, user satisfaction, and the amplification of divisive content on social media. PNAS Nexus, Feb 2025.

Tromble, R., et al. (2026). The political effects of X’s feed algorithm. Nature, Feb 2026.

Zuiderveen Borgesius, F., et al. (2021). Algorithms, manipulation, and democracy. Canadian Journal of Law and Technology (Cambridge University Press), Nov 2021.

Reports & Institutional Sources

Brookings Institution. (2024, July). How tech platforms fuel U.S. political polarization and what government can do about it.

George Washington University Institute for Data, Democracy & Politics. (n.d.). How do social media feed algorithms affect attitudes and behavior in an election campaign?

Harvard Law School. (2023, May). ‘The algorithm has primacy over media, over each of us.’ Panel remarks by Tristan Harris.

Reuters Institute for the Study of Journalism, Oxford. (2022, Jan). Echo chambers, filter bubbles, and polarisation: a literature review.

UC Berkeley Fung Institute for Engineering Leadership. (2022, May). Social media algorithms and their effects on American politics. Op-ed.

Limitations & Gaps

1. Microtargeting and behavioral data sourcing (Cambridge Analytica and successors) are referenced conceptually but not empirically sourced in depth — this represents an acknowledged gap.

2. The historical media analogies (yellow journalism, talk radio) are broadly documented claims, not sourced to primary historical scholarship in this piece.

3. Non-U.S. democratic contexts are referenced briefly but not analyzed in depth. Readers seeking comparative international analysis should consult the EU DSA enforcement literature directly.

4. Claims about Australian Twitter behavior are based on a 2022 longitudinal study; the platform has since rebranded and changed its algorithm. Effects may differ on current X.

All temporal claims verified through March 9, 2026.

AI Disclosure

This article was drafted with AI-assisted research and structural support, with human authorial oversight, editorial judgment, and voice throughout. Final content reflects the author’s perspective and analysis. Per editorial standards, AI-generated content comprises less than 50% of the published text.

Related Reading

1. ‘The Algorithmic Memory of Democracy’ — The Past Tense of Tomorrow

2. ‘Digital Democracy’s Fatal Flaw: Optimizing for Engagement, Not Deliberation’ — PTT Article #113 (Proposed)

3. ‘The Automation of Propaganda: From Human Persuaders to Bot Networks’ — PTT Article #128 (Proposed)

4. ‘Can Democratic Systems Regulate Technologies They Don’t Understand?’ — PTT Article #135 (Proposed)

5. Brookings Institution: How Tech Platforms Fuel U.S. Political Polarization (2024)